Prof. Jung inducted into the IEEE HPCA Hall of Fame

01.24.2026

Professor Jung has been inducted into the IEEE HPCA Hall of Fame.

HPCA (The International Symposium on High-Performance Computer Architecture) is one of the leading international conferences in computer architecture, focusing on advances in high-performance computing systems. The HPCA Hall of Fame recognizes researchers who have made sustained contributions to the field by publishing eight or more papers at the conference. Prof. Jung was selected for this honor following the publication of his recent paper, “AutoGNN: End-to-End Hardware-Driven Graph Preprocessing for Enhanced GNN Performance.”

Having previously been inducted into the IEEE/ACM ISCA Hall of Fame in 2025, Professor Jung has now been recognized by the Hall of Fame of two major top-tier conferences in computer architecture.

Professor Jung leads the Computer Architecture and Memory/Storage Systems Laboratory (CAMELab) and has conducted long-term research in interconnect technologies and memory/storage systems. He has authored a total of 145 papers published at major international conferences, including SOSP, OSDI, ISCA, MICRO, ASPLOS, HPCA, ATC, FAST, and SC. In 2022, he founded Panmnesia, a faculty startup developing link solutions to improve the efficiency of AI data centers, contributing to both academic and industrial research. In recognition of his continued contributions to science and technology, he was also selected as the first recipient of the 2026 Korea Science and Technology Award.

Check more: [ KAIST EE Announcement ]

Prof. Jung named the first recipient of the Korea Science and Technology Award in 2026!

01.08.2026

We are pleased to share that Prof. Jung named the first recipient of the Korea Science and Technology Award in 2026!

The Korea Science and Technology Award is presented monthly by the Minister of Science and ICT to a researcher who has made significant contributions to the advancement of science and technology through research achievements over the past three years. Prof. Jung was recognized for his work on modular AI data center architectures based on link and memory technologies. It addresses the limitations of fixed compute and memory configurations in large-scale AI systems by enabling flexible disaggregation and composition of resources using the CXL interconnect, improving both cost efficiency and operational efficiency.

In addition, Prof. Jung also proposed system architectures that integrate accelerator-centric interconnect technologies such as UALink and NVLink, along with high-bandwidth memory (HBM), into the modular AI data center designs. These designs were documented in technical reports and have received broad attention from both academia and industry.

Seungkwan/Seungjun's work (AutoGNN) has been accepted from HPCA 2026

11.08.2025

Graph Neural Networks (GNNs) enable learning on graph-structured data by coupling node representation learning with message passing over edges, thereby facilitating scalable modeling of complex relational structures. This paradigm becomes particularly critical with the emergence of massive real-world graphs—such as social networks, recommendation graphs, and knowledge graphs—which contain billions of nodes and edges but suffer from highly irregular connectivity and skewed degree distributions compared to grid-like data used in CNNs. Although these large-scale graphs promise richer context and more expressive representations, their potential is severely hindered by the inherent cost of neighborhood expansion and feature access during GNN training and inference. In particular, naïve full-graph or full-neighborhood aggregation can incur orders-of-magnitude overheads in memory traffic and latency, as high-degree nodes trigger expensive fan-out, substantially degrading hardware utilization and end-to-end performance. To effectively utilize GNNs on large graphs, it is essential to move away from conventional data pipelines toward architectures optimized for graph-centric preprocessing which can expose explicit parallelism.

We introduce AutoGNN, a fully automated FPGA-based accelerator that performs end-to-end GNN preprocessing directly on hardware. We redesign GNN preprocessing with set-partitioning and set-counting running by two hardware fundamentals, unified processing elements (UPEs) and single-cycle reducers (SCRs). UPE accelerates edge sorting and unique vertex selection through prefix sum and repositioning logic, while SCR parallelizes data reshaping and subgraph reindexing using comparators and adder trees. Furthermore, we introduce a dynamic reconstruction framework that optimizes hardware settings in real-time using pre-compiled bitstreams based on graph size and structure. To ensure practicality, we develop a lightweight cost model that integrates AutoGNN into the deep graph library (DGL), ensuring full software compatibility and automatically balancing performance and resource efficiency. Implemented on a 7nm Xilinx FPGA, we demonstrate that AutoGNN eliminates preprocessing bottlenecks and enables scalable and energy-efficient GNN inference by achieving up to 9.0x end-to-end speedup and 19.7x lower power consumption.

Congratulations, everyone!

Prof. Jung received Presidential Commendation Award

10.29.2025

Professor Jung has received the Presidential Commendation Award. Specifically, Prof. Jung has received the Presidential Commendation at the 2025 K-Tech Inside Show (Materials, Parts, and Equipment & Core Technology Exhibition) held at KINTEX in Goyang. The event was hosted by the Ministry of Trade, Industry and Energy and organized by multiple institutions including the Korea Evaluation Institute of Industrial Technology.

The commendation acknowledges his contribution to developing PanLink, a comprehensive interconnect technology that supports various links including CXL, UALink (Ultra Accelerator Link), NVLink Fusion, Ethernet, and PCIe. Earlier this year, Professor Jung published a technical white paper titled “Compute Can't Handle the Truth: Why Communication Tax Prioritizes Memory and Interconnects in Modern AI Infrastructure,” introducing a hybrid link architecture using CXL to overcome the scalability limitations of existing accelerator-centric interconnects such as UALink and NVLink. Professor Jung has also been actively involved in international standardization efforts through organizations including the CXL Consortium, UALink Consortium, PCI-SIG, and the Open Compute Project.

This recognition marks a meaningful achievement resulting from Professor Jung’s dedication and continued research efforts, shared with the many collaborators who have worked alongside him.

CAMEL held a seminar and invited three researchers from the SAFARI Research Group at ETH Zürich

10.23.2025

We invited Nisa Bostanci, İsmail Emir Yüksel, Nika Mansouri Ghiasi to a seminar at the CAMEL and had a great time of academic exchange. Nisa Bostanci gave a presentation titled ‘Emerging Covert Channels and Side Channels in RowHammer Defenses and Processing-in-Memory Architectures’. Memory is a central component of modern computing systems, continuously evolving to address robustness challenges . In this talk, she investigate the timing side-channel and covert-channel vulnerabilities in current and future DRAM-based systems that emerge with the adoption of read-disturbance defenses and with the paradigm shift towards memory-centric computing using Processing-In-Memory (PIM) architectures.

İsmail Emir Yüksel gave a presentation titled ‘Storage-Centric Systems for Genomics and Metagenomics’. In this talk, he experimentally demonstrate and analyze 1) the computational capabilities of real DRAM chips and 2) the robustness issues caused by in-DRAM operations, using over 300 real DDR4 DRAM chips. Our extensive characterization shows that commodity DRAM chips, without any modification to the chip itself, are able to execute bulk-bitwise computation and data movement operations in a reasonably robust manner.

Nika Mansouri Ghiasi gave a presentation titled ‘Understanding the Computational Capabilities of Real DRAM Chips and Robustness Issues They Introduce’. Her research interests are in computer architecture and computational biology, focusing on 1) storage systems, large-scale bioinformatics applications, and their interactions, and 2) emerging technologies such as ultra-dense 3D integrated systems. She is interested in designing high-performance, energy-efficient, and low-cost systems that facilitate the widespread adoption of data-intensive applications needed in healthcare and life sciences.

Prof. Jung received Minister of Trade, Industry and Energy Award

10.21.2025

Professor Myungsoo Jung, KAIST Endowed Chair Professor in our department, has received the Industry and Energy Award. Specifically, Professor Jung received the Minister of Trade, Industry and Energy Award at the 20th Electronics and IT Day event held at COEX in Seoul. The event was hosted by the Ministry of Trade, Industry and Energy and organized by the Korea Electronics Association.

The award recognizes his contribution to the development of Compute Express Link (CXL), a next-generation interconnect technology. Professor Jung and his research team have been working on related technologies since 2015. In 2022, they presented the world’s first full-system framework incorporating a CXL switch at the USENIX Annual Technical Conference. Later, he founded the faculty startup Panmnesia, which developed the world’s first CXL controller IP achieving double-digit nanosecond round-trip latency, as well as a fabric switch supporting the CXL 3.2 and PCIe 6.0 standards.

This recognition marks a meaningful achievement resulting from Professor Jung’s dedication and continued research efforts, shared with the many collaborators who have worked alongside him.

Seungjun Lee, Huiwon Choi, and Hyein Woo’s Work Presented at MICRO’25 SPICE and DoSSA Workshops

10.19.2025

We are delighted to announce that three of our recent works have been presented at the MICRO’25 Workshops — SPICE and DoSSA.

Seungjun Lee presented his paper “Zero-Overhead Sparsity Prediction for Dynamic Algorithm Selection in Deep Learning Models” at the SPICE Workshop. Exploiting the natural sparsity in CNN models is theoretically known to reduce computational overhead by more than 10×. However, as sparsity hanges continuously during training, the optimal method for skipping zero-valued multiplications also changes. Existing approaches measure the input matrix’s sparsity to select an optimal computational approach, but this measurement can be as time-consuming as the matrix multiplication itself on modern GPUs. This work introduces a zero-overhead sparsity prediction framework that predicts the sparsity of the input matrix instead of measuring it. The key insight is to leverage the statistical properties of normalization layers, which are essential in modern neural networks for stable training. Since these layers fix the mean and variance of their output distribution, we can mathematically derive the expected sparsity resulting from subsequent operations such as ReLU activations.

Hyein Woo presented his paper “MPI-over-CXL: Enhancing Communication Efficiency in Distributed HPC Systems” at the SPICE Workshop. MPI implementations commonly rely on explicit memory-copy operations, incurring overhead from redundant data movement and buffer management. This overhead notably impacts HPC workloads involving intensive inter-processor communication. In response, we introduce MPI-over-CXL, a novel MPI communication paradigm leveraging CXL, which provides cache-coherent shared memory across multiple hosts. MPI-over-CXL replaces traditional data-copy methods with direct shared memory access, significantly reducing communication latency and memory bandwidth usage. By mapping shared memory regions directly into the virtual address spaces of MPI processes, enabling pointer-based communication and eliminating redundant copies. The team developed both hardware and software environment, including a custom CXL 3.2 controller, FPGA-based multi-host emulation, and dedicated software stack. Our evaluations using representative benchmarks demonstrate substantial performance improvements over conventional MPI systems, underscoring MPI-over-CXL’s potential to enhance efficiency and scalability in large-scale HPC environments.

Huiwon Choi presented his paper “Memoization: Accelerating MCD BNN by Attribution-Based Dynamic Precision Scaling” at the DoSSA Workshop. Monte-Carlo Dropout Bayesian Neural Network (MCD BNN) measures the uncertainty of inference result, thereby ensuring safer deployment of neural networks in real-world applications. However, since MCD BNN requires a large number of stochastic forward passes per inference, it suffers from significantly higher inference overhead compared with conventional neural network. To mitigate this, Memoization introduces a hardware–algorithm co-design method composed of 1) an Attribution-based Dynamic Approximation algorithm and 2) a domain-specific hardware accelerator tailored for the algorithm. This cooptimization enables fast, energy-efficient and accurate MCD BNN inference. To the best of our knowledge, Memoization is the first to compute input-specific attribution at inference time for estimating neuron importance.

Congratulations to Seungjun, Huiwon, and Hyein for these outstanding achievements!

Seungkwan Kang and Huiwon Choi’s Work Presented at SOSP'25 BigMem and PACMI Workshops

10.13.2025

We are delighted to announce that two of our recent works have been presented at the SOSP Workshops — BigMem and PACMI.

Seungkwan Kang presented his paper “Mitigating Heat-induced Performance Cliffs of SSDs via OS-level Thermal-aware I/O Throttling” at the SOSP BigMem Workshop. This work addresses the critical issue of thermal-induced performance degradation in NAND flash-based SSDs. While modern SSDs leverage massive internal parallelism for high performance, their reliability deteriorates under high thermal stress, which accelerates charge loss and worsens data retention. Existing SSDs rely on simple firmware-level throttling using dynamic voltage and frequency scaling (DVFS), which often causes severe and long-lasting performance drops—referred to as thermal cliffs. To overcome these challenges, the authors propose the Linux Thermal-aware Throttler (LTT), an OS-level framework that continuously monitors and learns the SSDs’ thermal behavior and proactively regulates host I/O submission rates before the devices reach their critical temperatures.

Huiwon Choi presented “Bridging Natural Resilience and Cost-Effectiveness in SSDs for Containerized ML Applications” at the SOSP PACMI Workshop. This study introduces NatureSSD, a novel approach to flash storage allocation that capitalizes on the inherent natural resilience found in modern machine learning applications. NatureSSD is built upon a set of QoS-aware SSD resource allocation algorithms designed to meet the dual objectives of storage latency and longevity as specified by the host. Recognizing that the current Docker technology overlooks these specific requirements, our contribution extends to enhancing both the Linux kernel and Docker stacks. This enables applications within a multi-tenant container environment to specify their unique storage-level QoS requirements in terms of both reliability and latency. Our comprehensive evaluation illustrates that NatureSSD significantly prolongs the operational lifespan of conventional SSD technologies while surpassing the performance expectations set on a per-container basis.

Congratulations to Seungkwan, Huiwon for these impressive achievements!

Prof. Jung received Minister of Science and ICT Award

09.10.2025

Professor Myoungsoo Jung, a KAIST Endowed Chair Professor, has received the Minister of Science and ICT Award. Specifically, Professor Jung received the award at the 2025 Korea Innovative Startup Awards, held during SNK 2025 (Startup Nation Korea Global Forum). The event was jointly organized by Seoul National University and KAIST, with sponsorship from nine government agencies, including the Ministry of Science and ICT, the Ministry of Trade, Industry and Energy, and the Ministry of SMEs and Startups.

The award recognizes his leadership in AI infrastructure technology development through his faculty startup, Panmnesia, which provides link solutions for AI infrastructure — including the world’s first CXL controller IP achieving double-digit nanosecond round-trip latency, as well as a fabric switch supporting the CXL 3.2 and PCIe 6.0 standards.

This recognition marks a meaningful achievement resulting from Professor Jung’s dedication and continued research efforts, shared with the many collaborators who have worked alongside him.

Dongsuk/Miryeong's work (CXL Topology-Aware SSD) has been accepted from IEEE Micro 2025

05.21.2025

CXL enables memory disaggregation by decoupling memory resources from compute servers, facilitating scalable access to extensive memory capacities. This approach becomes particularly relevant with the emergence of storage class memory (SCM) technologies such as PRAM, Z-NAND, and XL-Flash, which provide higher memory densities but suffer from significantly greater latency compared to DRAM. Although these SCM-based, byte-addressable SSDs leveraging the CXL protocol promise enhanced memory scalability, their potential is hindered by inherent latency challenges. Specifically, SCM technologies exhibit up to 7× (PRAM) to 30× (Z-NAND, XL-Flash) higher latencies compared to DRAM, impacting application performance substantially. Traditional SSD designs mitigate write latency using internal DRAM buffers but inadequately address SCM's high read latencies. To effectively utilize CXL-SSDs, it is essential to shift away from conventional storage-oriented SSD designs towards architectures optimized for memory-centric operations. Current CPU-side cache prefetchers, constrained by hardware logic size and latency variations arising from complex multi-level CXL switch topologies, cannot fully exploit LLC performance when accessing data distributed across a CXL memory pool.

We propose ExPAND, an expander-driven CXL prefetcher designed specifically for CXL-SSDs, to overcome these limitations. ExPAND offloads prefetching responsibilities from the host CPU to CXL-SSDs, employing a heterogeneous machine learning model for accurate address prediction across multiple expander nodes. Host-side logic communicates execution semantics to CXL-SSDs, while SSD-side logic maintains cache coherence using CXL.mem’s back-invalidation mechanism. Additionally, ExPAND leverages detailed network topology data obtained during PCIe enumeration to precisely estimate latency, optimizing prefetch timing. Our evaluations demonstrate that ExPAND significantly outperforms traditional approaches, delivering performance improvements of 9.0× for graph applications and 14.7× for SPEC CPU benchmarks.

Congratulations, everyone!

Donghyun/Miryeong work (Containerized ISP and SSD Disaggregation) has been accepted from IEEE Micro 2025

05.21.2025

ISP minimizes data transfer for analytics but faces significant challenges in adaptation, compatibility, and resource disaggregation. We propose DockerSSD, an adaptable in-storage processing (ISP) model leveraging OS-level virtualization within SSDs, enabling containerized data processing directly at storage devices without requiring application-level or source-code modifications. DockerSSD addresses critical integration challenges by combining a novel communication method and lightweight firmware, specifically designed for seamless container execution and efficient ISP management. DockerSSD employs Ethernet over NVMe (Ether-oN), a kernel-level driver facilitating network-based ISP management and direct Ethernet communication between hosts and SSDs. Ether-oN introduces asynchronous upcalls and packet overriding within the NVMe protocol, thus supporting Docker-compatible command-line interfaces for real-time monitoring and control. As it does not alter existing interfaces, Ether-oN enables concurrent network and block I/O operations, significantly enhancing responsiveness and simplifying ISP interactions.

To efficiently execute containerized workloads, DockerSSD introduces Virtual Firmware (Virtual-FW), a minimal firmware stack embedding essential OS functionalities directly within SSDs. Virtual-FW dynamically constructs ISP-containers from standard Docker images, executing them via system-call emulation at bare-metal performance levels. This approach ensures secure execution through robust NVMe namespace and memory isolation mechanisms, safeguarding SSD resources from unauthorized access and execution overhead. Using Ether-oN and Virtual-FW, DockerSSD creates a computing-enabled, disaggregated storage pool that significantly reduces host computational overhead and enables large-scale distributed data processing. Evaluation results show DockerSSD achieves up to 2.0× performance improvement on I/O-intensive tasks and an average 7.9× acceleration in distributed large language model (LLM) inference.

Congratulations, all!

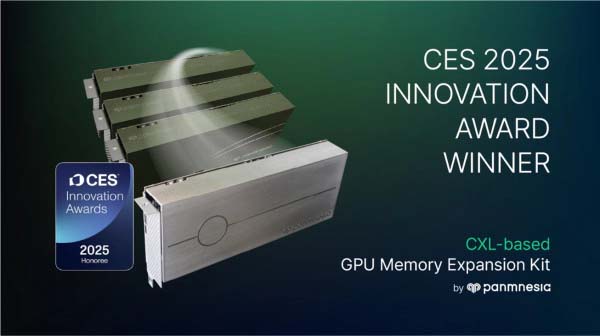

Prof. Jung's team wins CES 2025 Innovation Award for solution to minimize AI infrastructure Costs by replacing redundant GPUs into memory expanders

01.15.2025

At CES 2025, Prof. Jung's team was honored with the prestigious CES Innovation Award for its CXL-Based GPU Memory Expansion Kit, marking its second consecutive win at the global event. The CES Innovation Award is given by the CTA(Consumer Technology Association) to products with excellent technology and innovation among global innovation products. A panel of over 100 industry experts meticulously evaluates the entries based on their technicality, functionality and originality as well as the potential benefits these technologies can provide to humanity.

Large-scale AI services, such as generative AI, have become an integral part of modern life. However, these applications often require memory capacities exceeding several terabytes (10^12 bytes), far surpassing the memory capacity of GPU devices, which is about tens of gigabytes (10^9 bytes). To address this gap, server operators often deploy numerous GPUs in their AI infrastructure. While this approach helps meet memory demands, it comes with significant cost burdens, making it a less feasible solution for many organizations.

Prof. Jung's team has developed a solution leveraging its proprietary CXL IP to address this challenge. Users can deploy GPUs based on their computational workload needs. If additional memory capacity is needed, they can simply attach a CXL-Memory Expanders (which is highly memory-intensive), instead of adding more GPUs. This approach allows users to replace redundant GPUs used for traditional GPU memory expansion into Memory Expanders, thereby minimizing waste of computational resources and significantly reducing AI infrastructure costs.

Congratulations, everyone!

Prof. Jung received the digital innovation award with a commendation from the Minister of Science and ICT

12.02.2024

Professor Jung received the commendation from the Minister of Science and ICT at the 2024 Digital Innovation Award ceremony, held on November 15. This prestigious award recognizes his significant contributions to the development of CXL (Compute Express Link), a next-generation interconnect technology, his efforts to enhance South Korea’s semiconductor competitiveness, and his commitment to fostering young engineers through the establishment of the KAIST startup Panmnesia. For over a decade, Professor Jung has been at the forefront of research in storage and memory technologies. To date, he has presented 131 papers at international conferences, including top-tier conferences such as ISCA, MICRO, ASPLOS, SOSP, OSDI, HPC, ATC, FAST, and SC. Especially, his pioneering works on CXL-based memory expansion has positioned him as a leader in both research and technological advancement. With Panmnesia, the KAIST startup he established, Professor Jung introduced the wrold’s first full system framework with CXL 2.0 switches at the 2022 USENIX Annual Technical Conference. He also solidified the leadership in CXL technology by unveiling the world’s first system that includes all types of CXL 3.0/3.1 components at the 2023 SC Exhibition. Recently, he presented the world’s first double-digit nanosecond latency CXL IP, and received the CES Innovation Awards two years in a row with his CXL-based solutions for AI.

A Collaboration work for ZNS-based All-Flash Array Engine has been accepted from SOSP'24

08.07.2024

All-flash array (AFA) has become one of the most popular storage forms in diverse computing domains. While traditional AFA design adopts a block interface to seamlessly integrate with most existing software, this interface hinders the host from managing SSD internal tasks explicitly, which results in both short endurance and poor performance. In contrast, ZNS AFA, such as RAIZN, adopts ZNS SSDs and exposes the ZNS interface to the users. This solution attempts to upward the responsibility of SSD management. Unfortunately, it faces severe compatibility issues as most upper-layer software only takes block I/O accesses for granted.

In this work, we propose BIZA, a self-governing block-interface ZNS AFA to proactively schedule I/O requests and SSD internal tasks via the ZNS interface while exposing the user-friendly block interface to upper-layer software. BIZA achieves both long endurance and high performance by exploiting the zone random write area (ZRWA) and internal parallelisms of ZNS SSDs. Specifically, to mitigate write amplification, BIZA proposes a ghost-cache-based algorithm to identify hot data and absorb their updates in the scarce ZRWA. BIZA also employs a novel guess-and-verify mechanism to detect the mappings between zones and I/O resources at a low cost. Thereafter, BIZA can serve write requests and internal tasks in parallel with our customized I/O scheduler for high throughput and low latency. The evaluation results show that BIZA can reduce write amplification by 42.7% while achieving 93.2% higher throughput and 62.8% lower tail latency, compared to the state-of-the-art AFA solutions. The acceptance rate of SOSP this year is 17%! Congratulations!!

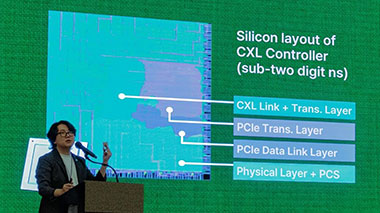

Keynote for Tomorrow AI Datacenter with CXL

07.16.2024

The Compute Express Link (CXL) has recently gained attention in the technology community due to its promising capabilities in managing hardware heterogeneity and streamlining resource disaggregation. While there are currently no commercial products or platforms that integrate CXL into memory pooling, it is expected to substantially enhance memory resource disaggregation in the near future. In this talk, we will provide an overview of CXL, explaining its importance and discussing the current state of the industry through the lens of a real-world example.

This talk will include our silicon proven CXL controller and full hardware IP stack, as well as several emerging CXL-enabled applications integrated with Panmnesia’s CXL solutions such as CXL-GPU and CXL-augmented AI systems.

Specifically, during the presentation, we will delve into the reasons behind the need for a new interface for cache coherence in existing computing and memory resources. We will also explore how CXL IPs can play a pivotal role in integrating various types of resources into a disaggregated pool. To better illustrate this concept, we will discuss two real-world system examples: the first, several CXL end-to-end systems that establishes a direct connection between a host processor complex and remote memory resources using CXL’s switch (2.0) and fabric (3.1) memory protocol; the second, a prototype storage expansion system that incorporates CXL technology for enhanced performance. In the final segment of our talk, we will briefly introduce an array of hardware prototypes or a CXL-enabled GPU system specifically designed to support future CXL systems. These prototypes are part of our ongoing project aimed at advancing the development and adoption of CXL technology in the industry.

One of CAMEL's collaboration work (User-Space All-Flash Array Engine) has been accepted from ATC'24

05.01.2024

All-flash array (AFA) is a popular approach to aggregate the capacity of multiple solid-state drives (SSDs) while guaranteeing fault tolerance. Unfortunately, existing AFA engines inflict substantial software overheads on the I/O path, such as the user-kernel context switches and AFA internal tasks (e.g., parity preparation), thereby failing to adopt next-generation high-performance SSDs.

Tackling this challenge, we propose ScalaAFA, a unique holistic design of AFA engine that can extend the throughput of next-generation SSD arrays in scale with low CPU costs. We incorporate ScalaAFA into user space to avoid user-kernel context switches while harnessing SSD built-in resources for handling AFA internal tasks. Specifically, in adherence to the lock-free principle of existing user-space storage framework, ScalaAFA substitutes the traditional locks with an efficient message-passing-based permission management scheme to facilitate inter-thread synchronization. Considering the CPU burden imposed by background I/O and parity computation, ScalaAFA proposes to offload these tasks to SSDs. To mitigate host-SSD communication overheads in offloading, ScalaAFA takes a novel data placement policy that enables transparent data gathering and in-situ parity computation. ScalaAFA also addresses two AFA intrinsic issues, metadata persistence and write amplification, by thoroughly exploiting SSD architectural innovations. Comprehensive evaluation results indicate that ScalaAFA can achieve 2.5x write throughput and reduce average write latency by a significant 52.7%, compared to the state-of-the-art AFA engines. The acceptance rate of USENIX ATC this year is 15.8%! Congratulations! See you San Jose soon!

Large-Scale Cross-Silo Federated Learning Aggregation work has been accepted from ISCA'24

04.08.2024

Cross-silo federated learning (FL) leverages homomorphic encryption (HE) to obscure the model updates from the clients. However, HE poses the challenges of complex cryptographic computations and inflated ciphertext sizes. As cross-silo FL scales to accommodate larger models and more clients, the overheads of HE can overwhelm a CPU-centric aggregator architecture, including excessive network traffic, enormous data volume, intricate computations, and redundant data movements. Tackling these issues, we propose Flagger, an efficient and high-performance FL aggregator. Flagger meticulously integrates the data processing unit (DPU) with computational storage drives (CSD), employing these two distinct near-data processing (NDP) accelerators as a holistic architecture to collaboratively enhance FL aggregation. With the delicate delegation of complex FL aggregation tasks, we build Flagger-DPU and Flagger-CSD to exploit both in-network and in-storage HE acceleration to streamline FL aggregation. We also implement Flagger-Runtime, a dedicated software layer, to coordinate NDP accelerators and enable direct peer-to-peer data exchanges, markedly reducing data migration burdens. Our evaluation results reveal that Flagger expedites the aggregation in FL training iterations by 436% on average, compared with traditional CPU-centric aggregators.

Prof. Myoungsoo Jung joins the ISCA hall of fame from this this year, and this year ISCA's acceptance rate is 19.6%. Congratulations!

Check more: [ KAIST EE Announcement ]

Dr. Miryeong Kwon has been honored with the prestigious KAIST Outstanding PhD Dissertation Award

04.01.2024

We are delighted to announce that Dr. Miryeong Kwon has been honored with the prestigious KAIST Outstanding PhD Dissertation Award (최우수 박사졸업논문 총장상), a recognition bestowed upon the highest-ranking Ph.D. candidate across all departments within KAIST. Dr. Kwon's academic portfolio is impressive, encompassing 40 publications that span critical advancements in high-performance storage/flash technologies and CXL cache coherent interconnect technologies.

Her contributions extend far beyond her remarkable scholarly achievements. Dr. Kwon embodies a set of exemplary characteristics that have positively influenced our team dynamics, fostering a collaborative and joyful environment within CAMEL. Her presence and leadership have catalyzed significant growth and success in our lab, and her unwavering dedication and care have been instrumental in our collective accomplishments.

Dr. Kwon's journey through her Ph.D. has set a benchmark that transcends the conventional expectations of doctoral research. Her capacity to blend high-caliber research with genuine, positive team interactions is a testament to her comprehensive excellence.

As CAMEL continues to flourish, we attribute a considerable portion of our progress to Dr. Kwon's efforts. Her trajectory in academia and beyond holds immense promise, and we are confident that her future endeavors will lead to outstanding contributions to any team or project she chooses to engage with. Our heartfelt congratulations to Dr. Kwon, along with our anticipation for her continued success, reflecting our belief in her potential to set new standards of excellence across various domains. Congratulations!

Prof. Jung's team wins CES 2024 Innovation Award for CXL Technology on AI Accelerator

11.22.2023

Prof. Jung's team announced that they have been bestowed with the CES 2024 Innovation Award for its pioneering CXL-enabled AI accelerator. This recognition came ahead of CES 2024, the world's premier technology event, set to unfold in Las Vegas.

The award-winning accelerator features virtually limitless memory capacity that brings significant performance boost for large-scale AI-driven services. This innovative feature empowers AI-driven services to handle increasing amount of data, leading to significant enhancements in accuracy and quality of the services. Unlike conventional AI accelerators constrained by their limited memory capacity, our accelerator shatters this barrier, enabling AI services to operate at an unprecedented level of sophistication. This holds a great potential for the AI industry, where the accuracy and quality of AI-driven services are a paramount of success in the competitive landscape.

By combining CXL technology with specialized hardware, the accelerator has achieved a 101x speedup over existing solutions that utilize SSDs to accommodate large volumes of data. Additionally, the team emphasized that the accelerator offers significant operating expenses savings for the system, as their specialized hardware reduces energy consumption for data movement and calculation.

Congratulations, everyone!

Donghyun and Miryeong's SSD Virtualization Research Gains Acceptance at HPCA'24

10.23.2023

Donghyun and Miryeong's groundbreaking work on computational SSD virtualization has received recognition with its acceptance by HPCA'24, held in Edinburgh, Scotland. The concept of processing data directly within storage presents an energy-efficient approach to handle vast datasets. Yet, the practical implementation of this renowned task-offloading model in real-world systems has encountered challenges. These hurdles are primarily attributed to suboptimal performance and numerous practical obstacles, encompassing limited processing prowess and heightened vulnerabilities at the storage level.

In our research, we introduce DockerSSD, a novel in-storage processing (ISP) model. This model is characterized by its ability to seamlessly run a diverse range of applications proximate to flash storage without necessitating modifications at the source level. DockerSSD pioneers lightweight OS-level virtualization within contemporary SSDs. This innovation ensures the intelligent functioning of storage in sync with the prevailing computing ecosystem, further enhancing the speed of ISP. In lieu of crafting a unique, vendor-specific ISP for offloading purposes, DockerSSD leverages existing Docker images. It establishes containers as autonomous execution entities within storage, facilitating real-time data processing at its source. To realize this, we engineered a fresh communication approach and virtual firmware. These elements collaboratively facilitate Docker image downloads and manage container operations, all while preserving the integrity of the current storage interface and runtime. Our design also incorporates automated container-associated network and I/O data pathways on hardware, optimizing ISP speeds and minimizing latency. Our empirical evaluations reveal DockerSSD's superior performance, clocking in at 2.0× faster speeds compared to conventional ISP models, while consuming 1.6× and 2.3× less power and energy, respectively.

It is noteworthy to mention that this year's paper acceptance rate for HPCA stands at a mere 18% — a significant decrease from previous years. A heartfelt congratulations to Donghyun and Miryeong on this remarkable achievement!

CAMEL has been selected for 2023 Samsung Future Technology Development Program: Venturing Deeper into Hyperscale AI

10.16.2023

We have been selected to participate in Samsung's Future Technology Development Program. This selection comes with funding for our research, entitled "Software-Hardware Co-Design for Dynamic Acceleration in Sparsity-aware Hyperscale AI." In recent years, Hyperscale AI models like MoE, autoencoders, and multimodal learning have seen increasing adoption, particularly in extensive model-driven applications such as ChatGPT. Recognizing that the computational characteristics of these models often change throughout the training process, we have proposed a variety of acceleration techniques. These techniques combine innovative software technologies, specialized algorithms, and hardware accelerator configurations. A significant insight has been the limitation of current training platforms in adjusting for variable data sparsity and computational dynamics across model layers, which inhibits effective adaptive acceleration. To remedy this, we have developed a dynamic acceleration method that can identify and adapt to shifts in computational traits in real-time. This research has potential implications not only for Hyperscale AI but also for the broader field of deep learning and the emerging services industry. Our objectives include the development of practical hardware models and the provision of open-source software solutions.

Since its inception in 2013, Samsung's Future Technology Development Program has invested KRW 1.5 trillion to foster technological innovation crucial for future societal advancements. The program has consistently supported initiatives in foundational science, innovative materials, and ICT, favoring particularly those that are high-risk but promise substantial returns. We have collaborated with Samsung FTDP foundation since 2021 on a project focused on accelerating Graph Neural Networks (GNNs). As we delve deeper into the Hyperscale AI landscape, we extend our gratitude for this opportunity and look forward to impactful contributions.

Distinguished Lecture on CXL Technology Hosted by Peking University

09.20.2023

We are thrilled to announce an upcoming distinguished lecture that promises to be a seminal event in the realm of Electrical and Computer Engineering. Hosted by Peking University, the lecture has garnered interest from top-tier institutions across China, including but not limited to Tsinghua University, Huazhong University of Science and Technology, Xiamen University, Sun Yat-sen University, Shanghai Jiao Tong University, and the Chinese Academy of Science. The talk, entitled "Revolutionizing Data Centers: The Role of CXL Technology," aims to shed light on the advancements and implications of Compute Express Link (CXL) in the contemporary computing landscape.

The distinguished lecture will be held on a date and at a venue to be announced, and it will be available in both virtual and in-person formats. The talk is not just another technical presentation; it seeks to engage its audience in a nuanced discussion about the sweeping changes that CXL technology is bringing to the world of computing. CXL is a cutting-edge communication protocol that has been designed to streamline interactions between central processing units and specialized computing units like field-programmable gate arrays and graphics processing units. Its role in enhancing the efficiency of data centers and accelerating the pace of advancements in sectors such as artificial intelligence and machine learning cannot be overstated. One of the most compelling aspects of this event is the breadth of its target audience. The lecture is meticulously designed to cater to a wide spectrum of professionals and academicians. Whether you are a hardware engineer, a software developer, a research scientist, a data center operator, or even a student in a related field, the insights offered in this lecture are poised to be invaluable. Beyond merely outlining the technical features and benefits of CXL technology, the speaker will also focus on its real-world applications and its potential to drive future innovations.

In summary, this distinguished lecture offers a rare and comprehensive overview of CXL technology, positioned within both a technical and practical framework. It aims to bring together the brightest minds in the field for a discourse that could very well shape the future of next-generation computing technologies. We earnestly look forward to your participation.

Breaking Down CXL: Why It's Crucial and What's Next

07.22.2023

At SIGARCH'23, we had the opportunity to deliver an invited talk titled "Breaking Down CXL: Why It's Crucial and What's Next." During this presentation, we extensively dissected the ins and outs of Compute Express Link (CXL) technology, highlighting its relevance and the present status of its advancement. CXL is a potent interconnect standard offering high-speed, low-latency communication between CPUs and diverse accelerators such as FPGAs and GPUs. It allows these devices to share memory space and maintain cache coherence, resulting in swift data access and efficient processing of intricate tasks. Our exploration of CXL began from its genesis, the CXL 1.0 specification, and continued through its successive iterations, including the recently launched CXL 3.0. We emphasized the unique features of each iteration, differences among them, and the advantages of CXL compared to other interconnect standards. Beyond just the technicalities of CXL, we also navigated its real-world impact. We specifically addressed how CXL is deployed in large-scale data centers and its role in fostering innovation in areas like artificial intelligence and machine learning. Overall, this talk served as a platform to share a thorough understanding of CXL technology, from its fundamental principles to its practical applications.

Memory Pooling over CXL and Go Beyond with AI

07.04.2023

We presented an insightful talk at this year's Open Compute Project (OCP) Regional Summit. The presentation, titled "Memory Pooling over CXL and Go Beyond with AI," introduced the concept of Compute Express Link (CXL) with a focus on AI applications. Hosted at the Seoul Convention and Exhibition Center (COEX) and Samsung, the OCP 2023 Regional Summit convened key players from the server, CPU, network, and memory sectors. We were at the forefront, elucidating the technique of Memory Pooling over CXL designed to enhance memory utilization and system performance. Our talk, in alignment with OCP's mission of "Empowering Open", offered fresh insights to help organizations leverage the benefits of open technologies and drive innovation in their fields. For those who missed the live presentation, the session will be available on the OCP's event website. Please check the below for more detailed information.

Check more: [ Info ]

Unleashing the Potential of CXL: Our Exploration and Discoveries

05.25.2023

Recently, we were privileged to receive an invitation from the distinguished Professor Byung-Gon Chun, CEO of FriendliAI at Seoul National University. This invite provided us an esteemed platform to present our novel work on the leading-edge Compute Express Link (CXL) technology. During our exposition, we started off by illustrating a unique software-hardware integrated system aimed at boosting search capabilities for approximate nearest neighbors. This avant-garde solution efficiently utilizes CXL to segregate the memory from host resources, thereby optimizing system efficiency. One of the critical features of CXL is its distant memory characteristics. Despite this, we designed our system to enhance search performance remarkably. We did this by adopting innovative strategies and fully utilizing all accessible hardware. This new approach has shown superior performance in terms of query latency when compared to existing platforms, confirming its efficacy.

In the next part of our discourse, we introduced a resilient system specifically architected for managing voluminous recommendation datasets. We utilized the versatility of CXL to flawlessly amalgamate persistent memory and graphics processing units into a cache-coherent domain. This amalgamation allows the graphics processing units to directly access the memory, thereby negating the need for software intervention. Moreover, this system adopts an advanced checkpointing technique to sequentially update model parameters and embeddings across various training batches. This sophisticated methodology has significantly elevated the training performance and notably reduced energy consumption, thus augmenting system efficiency. As we progress on our journey, we are enthusiastic about encountering more such opportunities to share our expertise and discoveries with the wider tech community. We are perpetually pushing the boundaries, exploring the realms of possibility with state-of-the-art technologies like CXL. Stay connected for more updates on our future endeavors as we continue this exhilarating expedition of innovation and discovery.

Miryeong and Sangwon's expander-driven prefetcher for CXL-SSD has been accepted by HotStorage'23

05.20.2023

We are thrilled to announce that a cutting-edge research paper, penned by Miryeong Kwon and Sangwon Lee, has been accepted at this year's HotStorage conference. We present a groundbreaking solution to a significant challenge in the realm of data storage and memory access. The research centers on the integration of Compute Express Link (CXL) with Solid State Drives (SSDs), a technology capable of scalable access to large memory. However, this capability traditionally comes at a cost, mainly slower speeds compared to Dynamic Random Access Memory (DRAM). To overcome this, we introduce an "expander-driven CXL prefetcher," a novel solution that shifts primary Last Level Cache (LLC) prefetch tasks from the host Central Processing Unit (CPU) to CXL-SSDs. Notably, this approach has been designed with CPU design area constraints in mind, underlining the real-world applicability of this research. Their evaluation results are staggering, revealing that the proposed prefetcher can significantly boost the performance of various graph applications, characterized by their highly irregular memory access patterns. The enhancement reaches up to 2.8 times when compared to a CXL-SSD expanded memory pool without a CXL-prefetcher. This revolutionary research represents a substantial step forward in memory access and storage technology, with the potential to influence various tech industries. We are eager to share their findings with the broader community at this year's HotStorage conference. They welcome fellow researchers, industry experts, and anyone with an interest in this technology, to engage with them during the event. We congratulate Miryeong Kwon and Sangwon Lee on their phenomenal work and eagerly anticipate their presentation at HotStorage. We look forward to seeing the transformative impact their research will undoubtedly have on the future of memory technology. See you at Boston soon!

Junhyeok's work for CXL-augmented ANNS has been accepted by USENIX ATC 2023

04.29.2023

We are thrilled to announce the acceptance of our research paper "CXL-ANNS: A Software-Hardware Collaborative Approach for Scalable Approximate Nearest Neighbor Search" at USENIX ATC, a highly-regarded conference with a rigorous review process and an acceptance rate of only 18%. Our paper proposes a novel approach to approximate nearest neighbor search (ANNS) that leverages the power of both software and hardware to achieve scalability and performance.We utilize compute express link (CXL) technology to separate DRAM from the host resources and place essential datasets into its memory pool, allowing us to handle billion-point graphs without sacrificing accuracy. To address the performance degradation that can occur in CXL systems, our approach caches frequently visited data and prefetches likely data based on graph traversing behaviors of ANNS. Additionally, CXL-ANNS leverages the parallel processing power of the CXL interconnect network to improve search performance even further. The results of our empirical evaluation are impressive, with CXL-ANNS exhibiting 22.9x lower query latency than state-of-the-art ANNS platforms and outperforming an oracle ANNS system with unlimited DRAM storage capacity by 2.9x in terms of latency. We believe that CXL-ANNS will be a game-changer in the field of ANNS, and we are honored to have our work recognized by USENIX ATC. Get ready to revolutionize your ANNS research with CXL-ANNS! See you in Boston, July!

New members of CAMEL present groundbreaking research at Samsung Global Technology Symposium

04.21.2023

Our team was recently invited to present three groundbreaking research projects at the Samsung Global Technology Symposium, an event attended by over 600 engineers and students. These projects, associated with Samsung's innovative social contribution programs (삼성미래기술육성), delved into various aspects of technology with the aim of revolutionizing computation and deep learning. The first project explored a hardware/software co-programmable framework for computational SSDs, while the second examined the use of hardware acceleration to improve preprocessing in graph neural networks. The third project focused on developing a comprehensive GNN-acceleration framework for efficient parallel processing of massive datasets. All of these projects have been published in renowned computer science and engineering conferences and journals. Currently, our team is working on preparing the final framework as a powerful open-source GNN platform, which is set to make its debut at the IPDPS'23 conference in Florida this May. We are proud of our team's accomplishments and look forward to sharing these advancements with the broader scientific and engineering communities.

Revolutionizing Memory Capacity and Processing: Our Latest High-Performance Accelerator and Memory Arrays

03.10.2023

We are excited to announce the release of our latest innovation, the 4th generation high-performance accelerator and memory arrays. With this breakthrough technology, we can now support the world's largest memory capacity while enabling near data processing capability. This development marks a significant milestone in our pursuit of more advanced and practical hardware and system research, specifically in the areas of AI and ML acceleration, cache coherent interconnect, and memory expansion. We welcome individuals who are passionate about pioneering cutting-edge research on computer architecture and operating systems (OS) to join us on this exciting journey.

Opening Talk Invitation to Heterogeneous and Composable Memory Workshop at HPCA'23

02.21.2023

We are delighted to share that our team member (Junhyeok Jang) will be presenting an opening invited talk at the upcoming Heterogeneous and Composable Memory Workshop, to be held in conjunction with HPCA'23 in Montreal, Canada. The talk will showcase our innovative research solution that utilizes the power of CXL 3.0 to efficiently process large-scale recommendation models (RMs) in a disaggregated memory setting while ensuring low overhead and failure tolerance during training. This one-day workshop is set to feature key industry players in the CXL domain such as Microsoft, Intel, and Panmnesia, as well as distinguished academics from KAIST, UCDavis, UMichigan, UToronto, and beyond. We invite you to explore the abstract and program details the workshop website and join us for this exciting event!

Check more: [ Program ]

Emerging CXL Workshop with Leading Industry Experts and Renowned Academics

02.18.2023

We had the honor of participating in a dynamic and engaging CXL workshop, where we joined forces with leading CPU and memory vendors including Intel, AMD, Panmnesia, Samsung, and SK-Hynix. It was an exciting opportunity to engage in high-level discussions regarding the latest trends and innovations in CXL technology, and how these advancements are shaping the future of large-scale data-centric applications. During the workshop, we were presented with valuable insights into the direction that data-centric applications should take, and the ways in which cutting-edge research directions such as near-data processing and AI acceleration are driving innovation in this space. These discussions were both stimulating and thought-provoking, and we are thrilled to have been part of such an insightful event. As we look towards the future, we are excited to continue our involvement with this exciting technology and to be part of future discussions that will shape the development and implementation of CXL technology. The workshop was a true privilege to attend, and we look forward to further engagement in this innovative field.

Invited Talk for Memory Pooling over CXL at Computer System Society 2023

02.07.2023

It was a great time to have an invited talk entitled “Memory Pooling over CXL” at Computer System Society 2023! In the talk, we introduced our history of development and research for CXL and memory expander (2015~2023) with some specific use-cases. We also showed a new type of memory pooling stack and introduced a CXL 3.0 memory expander device reference board we built up from the ground. We are also excited to share more technical details of those and share our vision at a closed CXL working symposium (AMD, Intel, Samsung, and SK-Hynix), this month!

Junhyeok's work for open-source GNN acceleration framework has been accepted by IEEE IPDPS 2023

01.28.2023

We present GraphTensor, a comprehensive open-source framework that supports efficient parallel neural net- work processing on large graphs. GraphTensor offers a set of easy-to-use programming primitives that appreciate both graph and neural network execution behaviors from the beginning (graph sampling) to the end (dense data processing). Our framework runs diverse graph neural network (GNN) models in a destination-centric, feature-wise manner, which can significantly shorten training execution times in a GPU. In addition, GraphTensor rearranges multiple GNN kernels based on their system hyperparameters in a self-governing manner, thereby reducing the processing dimensionality and the latencies further. From the end-to-end execution viewpoint, GraphTensor significantly shortens the service-level GNN latency by applying pipeline parallelism for efficient graph dataset preprocessing. Our evaluation shows that GraphTensor exhibits 1.4× better training performance than emerging GNN frameworks under the execution of large-scale, real-world graph workloads. For the end-to-end services, GraphTensor reduces training latencies of an advanced version of the GNN frameworks (optimized for multi-threaded graph sampling) by 2.4×, on average.

Miryeong's work entitled by failure tolerant training over CXL has been accepted by IEEE Micro 2023

01.08.2023

This paper proposes TrainingCXL that can efficiently process large-scale recommendation datasets in the pool of disaggregated memory while making training fault tolerant with low overhead. To this end, i) we integrate persistent memory (PMEM) and GPU into a cache-coherent domain as Type 2. Enabling CXL allows PMEM to be directly placed in GPU’s memory hierarchy, such that GPU can access PMEM without software intervention. TrainingCXL introduces computing and checkpointing logic near the CXL controller, thereby training data and managing persistency in an active manner. Considering PMEM's vulnerability, ii) we utilize the unique characteristics of recommendation models and take the checkpointing overhead off the critical path of their training. Lastly, iii) TrainingCXL employs an advanced checkpointing technique that relaxes the updating sequence of model parameters and embeddings across training batches. The evaluation shows that TrainingCXL achieves 5.2× training performance improvement and 76% energy savings, compared to the modern PMEM-based recommendation systems. The manuscript will be available soon in IEEE Micro Magazine!

Donghyun's memory pooling with CXL work has been invited and accepted by IEEE Micro 2023

01.07.2023

Compute express link (CXL) has recently attracted great attention thanks to its excellent hardware heterogeneity management and resource disaggregation capabilities. Even though there is yet no commercially available product or platform integrating CXL into memory pooling, it is expected to make memory resources practically and efficiently disaggregated much better than ever before. In this paper, we propose directly accessible memory disaggregation that straight connects a host processor complex and remote memory resources over CXL’s memory protocol (CXL.mem). The manuscript will be available soon in IEEE Micro Magazine!

Keynote for Process-in-Memory and AI-semiconductor

12.13.2022

We gave another keynote regarding "Why CXL?" at Process-in-Memory and AI-semiconductor Strategic Technology Symposium 2022, hosted by two major ministries of Korea. This talk shared several insights as to why CXL can be one of the key technologies in hyper-scale computing and shows the differences between CXL 1.1, 2.0, and 3.0. In contrast to SC’22’s distinguished lecture (where I showed up heterogenous computing over CXL), this keynote focused more on CXL memory expanders and explained the corresponding infrastructure that most memory/controller vendors are unfortunately missing to catch up on nowadays. We open to discuss and welcome to contact for more on the future CXL technologies.

CXL panel meeting with Intel, Microsoft, LBNL, and keynote for SC'22!

11.21.2022

It was a great time to see the CXL consortium co-chair (AMD/Intel) at SC'22. Panel meeting (with Microsoft, Intel, LBNL) was also excellent in bringing up all the issues that high-performance computing (HPC) need to address in order to have CXL. In the distinguished lecture, we successfully demonstrated the entire design of the CXL switch and a CXL 3.0 system integrating true composable architecture. In particular, we showed up a new opportunity to connect all heterogeneous computing systems and HPC (having multiple AI vendors and data processing accelerators) and integrate them into a single pool. In addition, we brought what CXL 3.0 can do from a rack-scale interconnect technology (back-invalidation, cache coherence engine, fabric architecture with CXL, etc.) We hope that there is a chance to share more about our vision and CXL 3.0 prototypes at somewhere venues in the near future!

We will have the Opening Distinguished Lecture, introducing the future of CXL at RESDIS of SC'22 (Dallas)!

09.30.2022

Compute express link (CXL) has recently attracted significant attention thanks to its excellent hardware heterogeneity management and resource disaggregation capabilities. Even though there is yet no commercially available product or platform integrating CXL 2.0/3.0 into memory pooling, it is expected to make memory resources practically and efficiently disaggregated much better than ever before. In this lecture, we will check why existing computing and memory resources require a new interface for cache coherent and show up how CXL can put the different types of resources into a disaggregated pool. As a use case scenario, this lecture will show two real system examples, building a CXL 2.0-based end-to-end system straight connects a host processor complex and remote memory resources over CXL's memory protocol and a CXL-integrated storage expansion system prototype. At the end of the lecture, we also plan to introduce a set of hardware prototypes designed to support the future CXL system (CXL 3.0) as our ongoing project. We love to see you all in Texas, Dallas coming November!.

Check more: [ Lecure info. ] [ Program ]

Miryeong won the best paper award of Samsung, this year!

08.26.2022

Our team (Miryeong, Seungjoon, and Hyunkyu -- Miryeong is the first author) has won the Best Paper Award from Samsung for their paper "Vigil-KV: Hardware-Software Co-Design to Integrate Strong Latency Determinism into Log-Structured Merge Key-Value Stores". This work Vigil-KV, a hardware and software co-designed framework that eliminates long-tail latency almost perfectly by introducing strong latency determinism. To make Get latency deterministic, Vigil-KV first enables a predictable latency mode (PLM) interface on a real datacenter-scale NVMe SSD, having knowledge about the nature of the underlying flash technologies.

Vigil-KV at the system-level then hides the non-deterministic time window (associated with SSD's internal tasks and/or write services) by internally scheduling the different device states of PLM across multiple physical functions. Vigil-KV further schedules compaction/flush operations and client requests being aware of PLM’s restrictions thereby integrating strong latency determinism into LSM KVs. We prototype Vigil-KV hardware on a 1.92TB Datacenterscale NVMe SSD while implementing Vigil-KV software using Linux 4.19.91 and RocksDB 6.23.0. To the best of our knowledge, this is the first work that implements the PLM interface in a real SSD and makes the read latency of LSM KVs deterministic in a hardware-software co-design manner. We evaluate six Facebook and Yahoo scenarios, and the results show that Vigil-KV can reduce the tail latency of a baseline KV system by 3.19× while reducing the average latency on Get services by 34%, on average. Miryeong's Vigil-KV work takes the top among all the candidates this year. She also got $5000 cash prize. Congratulations!

Read more: [ Paper ] [ Video ] [ KAIST EE News (Korean) ] [ KAIST EE News (English) ]

CAMEL is invited by and will demonstrate two CXL platforms at the CXL Forum 2022

07.22.2022

CAMEL team is invited to demonstrate two hot topics about CXL memory disaggregation and storage pooling at CXL Forum 2022, which is the hottest session at Flash Memory Summit (led by the CXL Consortium and MemVerge). That deals with CXL updates from the CXL Consortium, Korea Advanced Institute of Science and Technology (KAIST), ARM, Astera Labs, Elastics.cloud, Hazelcast, Kioxia, Lenovo, Montage, NGD Systems, PhoenixNAP, Rambus, Smart Modular, Synopsys, University of Michigan TPP team, and Xconn. Our sessions will start on August 2nd, 4:40PM PT.

In the first session, entitled "CXL-SSD: Expanding PCIe Storage as Working Memory over CXL", we will argue that CXL is helpful in leveraging PCIe-based block storage to incarnate a large, scalable working memory. CXL could be a cost-effective, and practical interconnect technology that can bridge PCIe storage’s block semantics to memory-compatible byte semantics. To this end, we should carefully integrate the block storage into its interconnect network by being aware of the diversity of device types and protocols that CXL supports. This talk first discusses what mechanism makes the PCIe storage impractical and unable to be used for a memory expander. Then, it will explore all the CXL device types and their protocol interfaces to answer which configuration would be the best for the PCIe storage to expand the host’s CPU memory. In the second session, we will demonstrate our CXL 2.0 end-to-end system, including the CXL network and memory expanders. You can check more detailed information about who will be in the CXL forum and how to register to attend via Zoom through the given below link.

Check more: [ Full Program ] [ Register ]

Miryeong won the NVMW memorable paper award this year!

07.21.2022

Our team (Miryeong, Donghyung, Sangwon -- Miryeong is the first author) has won the Memorable Paper Award from NVMW 2022 for their paper "HolisticGNN: Geometric Deep Learning Engines for Computational SSDs". This work deals with in-storage processing for large-scale GNN (graph neural network) using a novel computational SSD (CSSD) architecture and machine learning framework. Basically, it performs preprocessing of GNN in storage like near data processing (including sampling, graph conversion, etc.) and accelerates inference procedures over reconfigurable hardware. The team fabricates HolisticGNN’s hardware RTL and implements its software on an FPGA-based CSSD as well.

The NVMW memorable paper award is one of the prestige awards in non-volatile memory areas. It selects two papers published in the past two years in TOP-TIER venues and journals such as OSDI, SOSP, FAST, ISCA, MICRO, ASPLOS, and ATC. Among them, NVMW committee members not only examine the quality of all the top venue papers and corresponding presentations but also check the significant impact on non-volatile memory research fields. NVMW (founded in 2010) is a non-volatile memory workshop held annually by the Center for Memory and Recording Research (CMRR) and Non-Volatile Systems Laboratory (NVSL). For the past 13 years, there have been nine NVMW memorable paper awards. Miryeong's HolisticGNN work takes the top among all the candidates this year, and it is the first award that KAIST has achieved. She also got $1000 cash prize. Congratulations! You can check the history of all past winners here all the memorable paper award winners.

Our CXL 2.0-based Memory Expander and End-to-End System are introduced by the Next Platform’s headline

07.20.2022

The hyperscalers and cloud builders are not the only ones having fun with the CXL protocol and its ability to create tiered, disaggregated, and composable main memory for systems. HPC centers are getting in on the action, too, and in this case, the nextplatform is specifically talking about the Korea Advanced Institute of Science and Technology.

Researchers at KAIST’s CAMELab have joined the ranks of Meta Platforms (Facebook), with its Transparent Page Placement protocol and Chameleon memory tracking, and Microsoft with its zNUMA memory project, is creating actual hardware and software to do memory disaggregation and composition using the CXL 2.0 protocol atop the PCI-Express bus and a PCI-Express switching complex in what amounts to a memory server that it calls DirectCXL. The DirectCXL proof of concept was talked about it in a paper that was presented at the USENIX Annual Technical Conference last week, in a brochure that you can browse through here, and in a short video. (This sure looks like startup potential to the nextplatform .) -- Timothy Prickett Morgan

Read more: [ Headline ]

We have four studies that will be demonstrated at Hot series venues (HotChips and HotStorage)

06.20.2022

We have four research works that have been accepted from HotStorage’22 and HotChips34. The topics that our study deal with include i) CXL-enabled storage-integrated memory expanders (CXL-SSD), ii) an advanced system stack for high-performance ZNS, iii) a scalable RAID system for next-generation storage, and iv) large-scale GNN services through computational SSD and in-storage processing architecture. The works have been done by Miryeong Kwon, Donghyun Gouk, Hanyeoreum Bae, and Jiseon Kim. Congratulation! We are excited to see you all at HotStorage and HotChips this year! Please see the detail in our publication list [Publications]. The corresponding papers and slides will be updated soon.

Donghyun and Sangwoon's memory pooling with compute express link (CXL) has been accepted from USENIX ATC'22

04.30.2022

New cache coherent interconnects such as CXL have recently being attracted great attention thanks to their excellent capabilities of hardware heterogeneity management and resource disaggregation. Even though there is yet no real product or platform integrating CXL into memory disaggregation, it is expected to make memory resources practically and efficiently disaggregated much better than ever before.

In this paper, we propose direct accessible memory disaggregation, DirectCXL that directly connects a host processor complex and remote memory resources over CXL’s memory protocol (CXL.mem). To this end, we explore several practical designs for CXL-based memory disaggregation and make them real. As there is no operating system that supports CXL, we also offer CXL software runtime that allows users to utilize the underlying disaggregated memory resources via sheer load/store instructions. Since DirectCXL does not require any data copies between the host memory and remote memory, it can expose the true performance of remote-side disaggregated memory resources to the users. This year, the acceptance rate of USENIX ATC is 16%. Congratulations!!

Miryeong and Seungjoon KVS SSDs with strong latency determinism has been accepted from USENIX ATC'22

04.30.2022

We propose Vigil-KV, a hardware and software co-designed framework that eliminates long-tail latency almost perfectly by introducing strong latency determinism. To make Get latency deterministic, Vigil-KV first enables a predictable latency mode (PLM) interface on a real datacenter-scale NVMe SSD, being aware of the nature of the underlying flash technologies. Vigil-KV at the system-level then hides the non-deterministic time window (being associated with SSD internal tasks and/or write services) by internally scheduling the different device states of PLM across multiple physical functions. Vigil-KV further schedules compaction/flush operations and client requests being aware of PLM's restrictions thereby integrating strong latency determinism into LSM KVs. We implement Vigil-KV upon a 1.92TB NVMe SSD prototype and Linux 4.19.91, but other LSM KVs can adopt its concept. We evaluate diverse Facebook and Yahoo scenarios with Vigil-KV, and our evaluation shows that Vigil-KV can reduce the tail latency of a baseline KV system by 3.19x while reducing the average latency by 34%, on average.

This year, the acceptance rate of USENIX ATC is 16%. Congratulations!!

CAMEL's Large-Scale Computatioanl SSD Research Has Been Awarded from IITP

04.16.2022

CAMEL’s computational SSD research project is just awarded by the Institute of Information & Communications Technology Planning & Evaluation (IITP). The research will expose the practical but fundamental limits of the computational storage model, including the concept of Near-Data Processing (NDP), Smart SSD, and in-storage processing (ISP), and explore why computational SSDs have not been adopted in the industry by far. The research project will also suggest a breaking-through model and solutions that make computational SSDs be deployed in many computation domains ranging from enterprise-scale to cloud computing to data-centers.

This project includes the development of actual storage cards, hardware RTL, firmware, and large-scale I/O-centric operating systems, enabling a new model of generic computational SSDs. $5M (USD) solely coming from IITP will in total support CAMEL’s intelligent flash and computational storage projects for the next three years.

CAMEL uncovers The World-first CXL-based Memory Disaggregation IPs

03.05.2022

As the big data era arrives, resource disaggregation has attracted significant attention thanks to its excellent scale-out capability, cost efficiency, and transparent elasticity. Disaggregating processors and storage devices well does break the physical boundaries of data centers and high-performance computing into separate physical entities. In contrast to the other resources, it is non-trivial to achieve a memory disaggregation technique that supports high performance and scalability with low cost. Many industry prototypes and academic simulation/emulation-based studies explore a wide spectrum of approaches to realize such memory disaggregation technology and put significant efforts into making memory disaggregation practical. However, the concept of memory disaggregation has not been successfully realized by far due to several fundamental challenges.

CAMEL has prototyped the world-first CXL solution (POC) that directly connects a host processor complex and remote memory resources over computing express link (CXL) protocol. CAMEL’s solution framework includes a set of CXL hardware and software IPs, including CXL switch, processor complex IP, and CXL memory controller. The solution framework can completely decouple memory resources from computing resources and enable high-performance, fully scale-out memory disaggregation architecture.

Read more: [ White paper ]

Our Hardware and Software Co-Design for Energy-Efficient Full System Persistence is accepted by ISCA'22

03.03.2022

LightPC work argues that there is a better way to use PRAM than what Intel's Optane Persistent Memory solution uses. We implement a real persistent system and OS, which put all pure NVM DIMMs, NVM controllers, and RISC-V-based 03 octa-core CPU altogether. LightPC has NO volatile states at the runtime (e.g., DRAM) and guarantees that the target system doesn't lose any multi-threaded program execution, data in the heal and stack. In addition, you don't need to change anything on your application

ABSTRACT: We propose LightPC, a lightweight persistence-centric platform to make the system robust against power loss. LightPC consists of hardware and software subsystems, each being referred to as open-channel PMEM (OC-PMEM) and persistence-centric OS (PecOS). OC-PMEM removes physical and logical boundaries in drawing a line between volatile and non-volatile data structures by unshackling new memory media from conventional PMEM complex. PecOS provides a single execution persistence cut to quickly convert the execution states to persistent information in cases of a power failure, which can eliminate persistent control overhead. We prototype LightPC's computing complex and OC-PMEM using our custom system board. PecOS is implemented based on Linux 4.19 and Berkeley bootloader on the hardware prototype. Our evaluation results show that OC-PMEM can make user-level performance comparable with a DRAM only non-persistent system, while consuming 72% lower power and 69% less energy. LightPC also shortens the execution time of diverse HPC, SPEC and In-memory DB workloads, compared to traditional persistent systems by 1.6x and 8.8x, on average, respectively.

Our Hardware/Software Co-Programmable Framework for Computational SSDs to Accelerate GNNs is accepted by FAST'22

12.11.2021

Graph neural networks (GNNs) process large-scale graphs that consist of a hundred of billion edges. In contrast to traditional deep learnings, the unique behaviors of GNNs are engaged with a large set of graph and embedding data on storage, which exhibits complex and irregular preprocessing.

We propose a novel deep learning framework on large graphs, HolisticGNN that provides easy-to-use, near-storage inference infrastructure for fast, energy efficient GNN processing. To achieve the best end-to-end latency and high energy efficiency, HolisticGNN allows users to implement various GNN algorithms where the actual data exist and executes them directly from near storage in a holistic manner. It also enables RPC over PCIe such that the users can simply program GNNs through a graph semantic library without understanding the underlying hardware or storage configurations at all. We fabricate HolisticGNN's hardware RTL and implement its software on our FPGA-based computational SSD (CSSD). Our empirical evaluations show that the inference time of HolisticGNN outperforms GNN inference services using high-performance modern GPUs by 7.1× while reducing energy consumption by 33.2×, on average. This year, the acceptance rate of fast is 21%. For the entire history of KAIST, there are only three papers published by USENIX FAST, and we have all of them!! Congratulations!

Read more: [ News at KAIST ] [ Naver headline + 26 ] [ Press/Newspaper ]

Our PMEM-based in-memory graph study has been accepted from ICCD'21

08.20.2021

In this work, we investigate runtime environment characteristics and explore the challenges of existing in-memory graph processing. This system-level analysis includes results and observations, which are not reported in the literature with existing expectations of graph application users. To address a lack of memory space problem for big-scale graph analysis, we configure real persistent memory devices (PMEMs) with different operation modes and system software frameworks. Specifically, we introduce PMEM to a representative in-memory graph system, Ligra, and perform an in-depth analysis that uncovers the performance behaviors of different PMEM-applied in-memory graph systems. Based on the results, we also modify Ligra to improve the graph processing performance and data persistency. Our evaluation results reveal that Ligra, with our simple modification, exhibits 4.41× and 3.01× better performance than the original Ligra running on a virtual memory extension and conventional persistent memory.

Jie's optical network-based new memory for GPU has been accepted from MICRO'21

07.15.2021